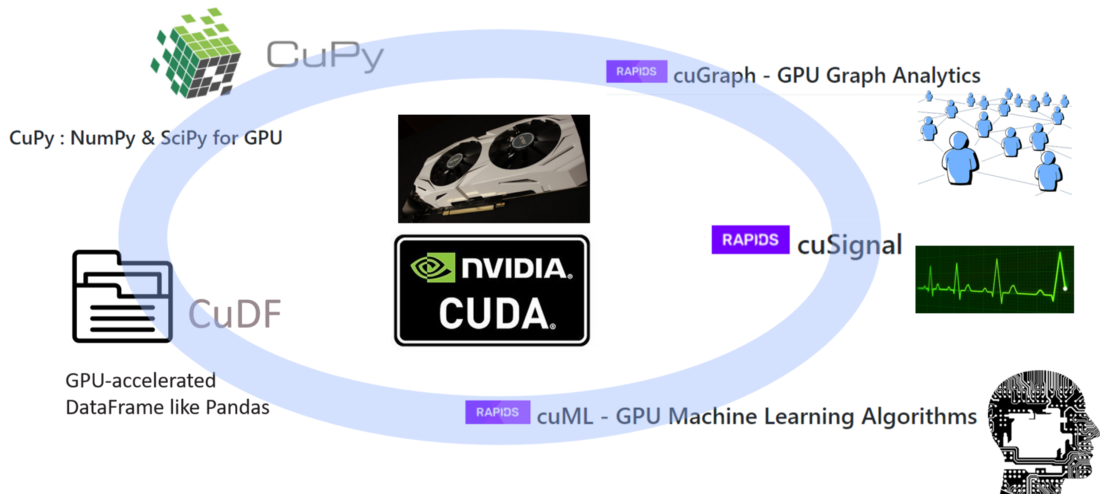

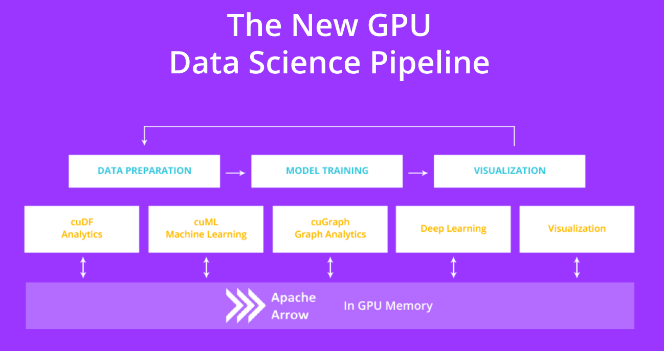

Beyond Pandas and Apache Spark for Data Manipulation: Apache Arrow and GPU - Hiberus Tecnología Blog

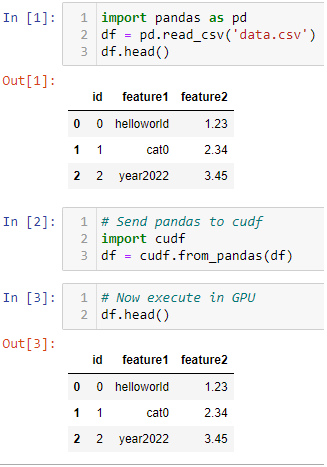

Gilberto Titericz Jr on Twitter: "Want to speedup Pandas DataFrame operations? Let me share one of my Kaggle tricks for fast experimentation. Just convert it to cudf and execute it in GPU"

Pandas DataFrame Tutorial - Beginner's Guide to GPU Accelerated DataFrames in Python | NVIDIA Technical Blog

Python Pandas Tutorial – Beginner's Guide to GPU Accelerated DataFrames for Pandas Users | NVIDIA Technical Blog

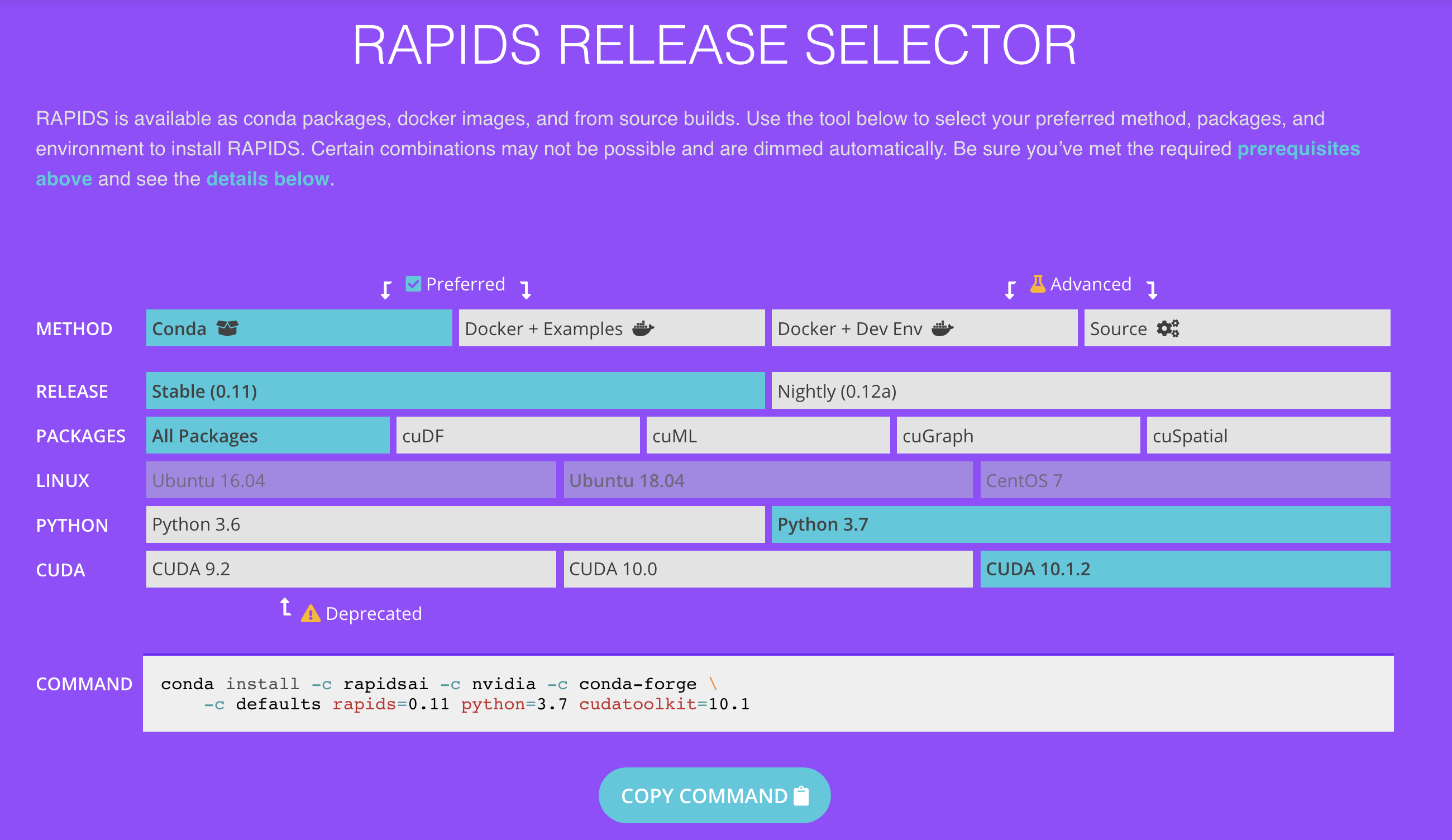

Speedup Python Pandas with RAPIDS GPU-Accelerated Dataframe Library called cuDF on Google Colab! - Bhavesh Bhatt

Using GPUs to run Pandas. When performing data-related… | by Onepagecode | Onepagecode | Oct, 2022 | Medium

Panda RGB GPU Backplate Custom Made for ANY Graphics Card Model now with Vent Cut Outs and ARGB (Addressable LEDs) - V1 Tech